Roughly twenty years ago, I installed a monitoring tool across an IT estate for the first time. It was called Zenoss, an open source infrastructure monitoring platform that had just launched, and it was genuinely impressive. Auto-discovery, event management, alerting, a clean web interface. Free. Extensible.

We went from almost no monitoring to full-blown, estate-wide visibility practically overnight. And it nearly buried us.

The First Time

The assumption was reasonable. We had limited visibility of our infrastructure. Zenoss would give us proactive monitoring, a way to keep abreast of the environment, spot issues early, and deal with them before they became incidents. A dashboard we could watch. A system that would tell us when something needed attention.

What nobody told us, and what I certainly hadn't thought through, was what happens when you point a capable monitoring tool at an entire estate that's never been monitored before.

Everything lights up.

Every misconfigured service. Every disk creeping toward capacity. Every intermittent network blip that had been happening silently for months. Every threshold breach on systems nobody had looked at in years. All of it, surfaced at once, firing alerts into inboxes and dashboards that were designed for a calm, well-tuned environment, not an avalanche.

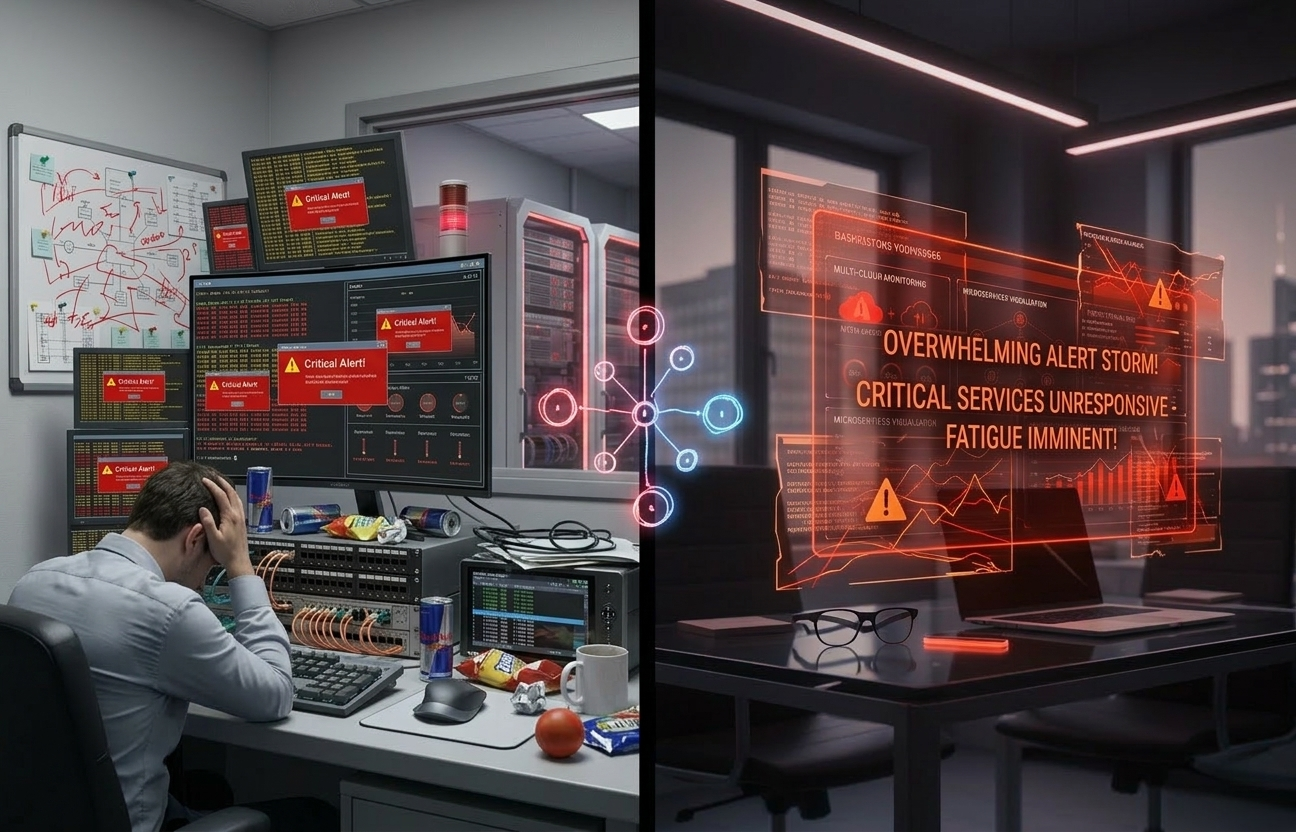

The team was swamped. Not with incidents, with noise. Hundreds of alerts a day, most of them technically valid but practically meaningless without context or prioritisation. There was no triage framework. No classification of what was critical versus informational. No agreed process for who owned what, or which alerts warranted action and which could be acknowledged and suppressed.

We had all the data. We had no way to act on it.

The irony was painful. We'd deployed monitoring to be more proactive, and instead we'd created a firehose that made the team more reactive than they'd ever been. People started ignoring alerts, not out of laziness, but out of self-preservation. When everything is urgent, nothing is. The tool was working perfectly. The problem was entirely ours.

The Lesson That Should Have Stuck

In hindsight, the mistake was obvious. We'd thought about the technology. We hadn't thought about the people or the process.

Nobody had asked: how will the team actually consume this information? Who triages? What's the escalation path? What gets suppressed? What gets a ticket? What volume of alerts can a team of this size realistically process in a day without drowning?

The tool wasn't the problem. Zenoss was, and remained for years, a genuinely capable platform. Its open source edition was eventually discontinued, and the platform itself was later acquired by Virtana and folded into their commercial observability portfolio. The technology was sound.

The gap was everything around it. The operating model. The triage process. The cultural readiness. The simple human question of what happens when you give a team visibility they've never had before, without giving them a framework for dealing with what they see.

I learned that lesson the hard way. And I assumed it was one of those formative experiences you carry with you, a mistake you make once, understand deeply, and never repeat.

I was half right. I never repeated it. But I watched someone else repeat it almost exactly.

The Second Time

Fast-forward to the recent past. Different organisation. Different decade. Different tool: this time ScienceLogic, a commercial monitoring and AIOps platform. Same ambition: deploy comprehensive monitoring across the estate. Same promise: visibility, proactive alerting, operational intelligence.

I was involved in the implementation, and I could see it coming from the first planning session. The focus was entirely on coverage. Which devices. Which networks. Which applications. How quickly can we get everything monitored? The conversation was technical, thorough, and completely one-dimensional.

So I asked the question.

“Have you thought about how the teams are going to react to this? Functionally, as teams, how are they going to handle the volume of alerts when this goes live across the whole estate?”

They looked at me as if I had two heads.

It wasn't hostility. It was genuine incomprehension. The assumption, the same assumption I'd made twenty years earlier, was that monitoring is inherently good. More visibility is better. The tool will surface problems. The teams will fix them. What's to think about?

And guess what happened.

Alert fatigue. Teams inundated. Dashboards full of noise. Critical alerts lost in a sea of warnings and notifications because the volume was unmanageable. The same pattern, playing out in a different decade with a different tool in a different organisation, producing the same result.

Déjà vu doesn't begin to cover it.

Why This Keeps Happening

It keeps happening because nobody teaches this. Not the vendors. Not the implementation partners. Not the certification courses. Not the sales engineers doing the demos.

Monitoring tools are sold on capability. Look at this dashboard. Look at this auto-discovery. Look at this correlation engine. And the capability is real: these are genuinely powerful platforms. But the implicit message is that deploying the tool is the hard part, and once it's in, the value follows automatically.

It doesn't.

Deploying a monitoring tool without an operational model for consuming its output is like installing a fire alarm system in a building where nobody has been told what to do when the alarm sounds. The system works. The alarm fires. Everyone stands around wondering whose job it is to respond.

The operational model is the unsexy part. It's the bit that doesn't appear in vendor slide decks or product demos. But it's the bit that determines whether the tool becomes a genuine operational asset or an expensive source of background noise that everyone learns to ignore.

That model needs to answer questions like:

What's worth alerting on? Not everything that can be monitored should generate an alert. A disk at 70% capacity is useful information. It's not an alert. An alert should demand attention and imply action. If it doesn't, it's noise, and noise erodes trust in the system faster than anything else.

Who owns what? If an alert fires and three teams could theoretically respond, nobody will. Ownership needs to be clear by service, by system, and by category, and it needs to be agreed in advance, not negotiated in the moment.

What's the triage process? When a hundred alerts land in an hour, which ones get looked at first? Without a defined triage process and severity model, the answer defaults to whichever alert the on-call engineer happens to see first.

What's the suppression strategy? Planned maintenance, known issues, and transient conditions all generate valid alerts that don't require action. If you haven't built suppression and maintenance windows into your operational model, you're artificially inflating the noise floor.

How do you tune over time? A monitoring deployment isn't a project with an end date. It's a living system that needs continuous refinement. Thresholds need adjusting. New alerts need adding. Old ones need retiring. If nobody owns the tuning process, the system degrades, slowly, then quickly.
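To make those questions concrete, here is a minimal sketch in Python of how the first four might be written down as rules rather than left as tribal knowledge. It is an illustration under stated assumptions, not how Zenoss, ScienceLogic, or any other platform implements triage: the service names, team names, thresholds, and the `Event`, `MaintenanceWindow`, `classify`, and `triage` names are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime
from enum import IntEnum


class Severity(IntEnum):
    INFO = 0       # useful information, recorded but never sent to a person
    WARNING = 1
    CRITICAL = 2


@dataclass(frozen=True)
class Event:
    service: str
    metric: str
    value: float
    at: datetime


@dataclass(frozen=True)
class MaintenanceWindow:
    service: str
    start: datetime
    end: datetime

    def covers(self, event: Event) -> bool:
        return event.service == self.service and self.start <= event.at <= self.end


# Hypothetical ownership map: agreed in advance, not negotiated in the moment.
OWNERS = {"billing-db": "dba-team", "web-frontend": "platform-team"}

# Hypothetical (warning, critical) thresholds per metric.
THRESHOLDS = {"disk_used_pct": (85.0, 95.0)}


def classify(event: Event) -> Severity:
    """Decide whether an event is worth alerting on at all."""
    limits = THRESHOLDS.get(event.metric)
    if limits is None:
        return Severity.INFO  # no agreed threshold means no alert
    warning, critical = limits
    if event.value >= critical:
        return Severity.CRITICAL
    if event.value >= warning:
        return Severity.WARNING
    return Severity.INFO


def triage(events, windows):
    """Return (owner, severity, event) tuples that need action, most severe first."""
    queue = []
    for event in events:
        if any(w.covers(event) for w in windows):
            continue  # suppressed: planned maintenance
        severity = classify(event)
        if severity == Severity.INFO:
            continue  # information, not an alert
        owner = OWNERS.get(event.service, "UNOWNED")
        queue.append((owner, severity, event))
    # Highest severity first, then oldest first within the same severity.
    queue.sort(key=lambda item: (-item[1], item[2].at))
    return queue
```

With these assumed thresholds, a disk at 70% never reaches a person, a disk at 97% on `billing-db` lands at the top of the DBA team's queue, and anything that falls inside a maintenance window is dropped before it can add to the noise. Anything routed to `UNOWNED` is a gap in the operating model, not a monitoring problem. The fifth question, tuning, is simply the ongoing job of keeping `OWNERS` and `THRESHOLDS` honest.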

The AI Promise

This is where it gets interesting, because the industry knows alert fatigue is a problem. It's been a known problem for years. And the current answer, the one every vendor is racing toward, is AI.

AIOps platforms promise to solve the alert fatigue problem through intelligent correlation, noise reduction, anomaly detection, and automated root cause analysis. Instead of five hundred alerts about related symptoms, you get one correlated incident with a probable cause. Instead of static thresholds that fire at the same level regardless of context, you get dynamic baselines that learn what's normal for each system.
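The two ideas in that paragraph can be shown in a few lines of Python. This is a deliberately naive sketch, assuming a simple mean-plus-standard-deviation baseline and a hand-written dependency map; commercial AIOps products use far more sophisticated models, and none of the function names or numbers below come from any vendor.

```python
from collections import defaultdict
from statistics import mean, stdev


def static_breach(value: float, threshold: float) -> bool:
    """A static threshold fires at the same level regardless of context."""
    return value >= threshold


def baseline_breach(history: list[float], value: float, k: float = 3.0) -> bool:
    """Flag a value more than k standard deviations above this system's own mean."""
    if len(history) < 2:
        return False  # not enough data to learn what normal looks like
    mu = mean(history)
    sigma = max(stdev(history), 1e-9)  # avoid a zero-width band on flat history
    return value > mu + k * sigma


def correlate(
    alerts: list[tuple[int, str]],
    depends_on: dict[str, str],
    window_s: int = 300,
) -> dict[tuple[str, int], list[str]]:
    """Group (timestamp_seconds, host) alerts by shared upstream dependency and time bucket."""
    incidents = defaultdict(list)
    for ts, host in alerts:
        root = depends_on.get(host, host)  # hosts with no known parent stand alone
        incidents[(root, ts // window_s)].append(host)
    return dict(incidents)
```

A batch server that normally runs at around 90% CPU trips a static 80% threshold all day, but stays quiet under its own baseline. A normally idle server jumping to 45% does the opposite. Likewise, alerts from `web1`, `web2`, and the `core-switch` they both sit behind collapse into one incident keyed on the switch. Note what the code cannot supply: someone still has to provide `depends_on`, choose `k`, and decide whether the resulting incident is actionable.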

Zenoss itself went this way: the platform that started as a free, open source tool evolved into an AI-driven commercial product before its acquisition by Virtana. The trajectory tells you where the market thinks the answer lies.

And to be fair, the technology is getting genuinely better at this. Machine learning models that can correlate thousands of events into a handful of actionable incidents. Natural language processing that can summarise what's happening in plain English. Predictive analytics that can flag problems before they generate alerts at all.

But here's the question I can't shake, informed by twenty years of watching the same pattern repeat: is AI-driven prioritisation the answer, or is it another sophisticated tool that will underperform if the people and process fundamentals aren't in place?

I suspect the answer is both. AI genuinely can reduce noise. It can correlate events that humans would miss. It can learn baselines that would take months to configure manually. But it still needs someone to define what “actionable” means in your context. It still needs clear ownership models. It still needs humans who understand the environment well enough to tell the AI when it's wrong.

The risk is that AI becomes the new version of the same assumption: deploy the tool, and the problem solves itself. It won't. It'll just be a more expensive version of the same disappointment if the operational foundations aren't there.

What I'd Tell Anyone Deploying Monitoring Today

Start with the operating model, not the technology. Before you choose a platform, answer the human questions. Who responds? How do they triage? What volume can they realistically handle? What does “actionable” mean in your environment? Get those answers agreed and documented before a single agent is installed.

Roll out incrementally. Don't monitor everything on day one. Start with the systems that matter most, tune the alerts until the signal-to-noise ratio is manageable, then expand. The urge to get full coverage quickly is understandable. Resist it. Coverage without consumability is just expensive noise.
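One way to make "manageable" something you can check rather than argue about is a simple gate applied before each expansion. The sketch below is in Python and rests on assumptions of my own: that every alert in the review period has been labelled `"actioned"` or `"noise"` by the team, and that the limits (80% actionable, 20 alerts a day) are placeholders you would replace with figures agreed for your own team.

```python
from collections import Counter


def ready_to_expand(
    outcomes: list[str],
    days: int,
    min_actionable: float = 0.8,        # hypothetical target, agree your own
    max_alerts_per_day: float = 20.0,   # hypothetical team capacity
) -> bool:
    """Return True if the current scope is quiet and useful enough to widen coverage.

    outcomes holds one label per alert raised in the review period:
    "actioned" if someone had to do something, "noise" otherwise.
    """
    if days <= 0:
        raise ValueError("days must be positive")
    if not outcomes:
        return False  # no alerts yet means no evidence either way
    counts = Counter(outcomes)
    actionable_ratio = counts["actioned"] / len(outcomes)
    alerts_per_day = len(outcomes) / days
    return actionable_ratio >= min_actionable and alerts_per_day <= max_alerts_per_day
```

Nine actioned alerts and one noisy one over a week passes the gate. Two actioned and eight noisy does not, and neither does three hundred perfectly valid alerts in a week, because volume the team cannot absorb is still a failure of consumability.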

Treat tuning as a permanent function, not a project phase. The monitoring deployment is never “done.” Assign ownership for ongoing threshold review, alert refinement, and suppression management. If nobody owns it, it will rot.

And challenge the assumption that more data is always better. It isn't. Better data is better. The goal is the right alert, to the right person, at the right time, with enough context to act.

Once you've earned that clarity, once you genuinely understand what's actionable and what the right response looks like, ask the next question: does a human even need to be in that loop? Autonomic, self-remediating systems aren't science fiction. If you've done the hard work of understanding the problem and defining the response, letting the platform act on it without waiting for someone to click a button isn't laziness; it's maturity. But get there in that order. Automate what you understand. Skip the understanding and you'll just create a different kind of chaos, faster.

About Paradigm-ICT

Paradigm-ICT is an interim IT transformation consultancy specialising in programme recovery, complex transition delivery, and pragmatic technology enablement across manufacturing, utilities, retail, and financial services.

Founded on 30 years of hands-on operational experience — starting in business operations, not IT — we bring a business-first perspective to technology leadership that most consultancies can’t.
